The interaction module is meant as an additional device for Automated Guided Vehicles (AGVs). The module recognizes human poses using machine learning and interprets the intentions of the human(s). A Semester 6 ICT & Technology student project developed an interaction module in the first half of 2019. The student project built on research into pose recognition and the interpretation of human intentions carried out during the TEC Smart Predictable Robots project.

The interaction module is meant to be mounted on an Automated Guided Vehicle (AGV) so that the vehicle can detect, avoid and interact with humans. It should be capable of detecting humans in the area surrounding the AGV (min. 1 meter, max. 100 meters). The interaction module also provides feedback to its environment about the status of the AGV.

The current implementation is able to recognize three human gestures. The module provides feedback with its eyes: in a

The interaction module works independently of the AGV, but should interact with it quickly. It sends the AGV a signal about the humans in its environment. The interaction module decides on its own, separately from the AGV logic, whether the moving AGV should avoid a human, for example by driving slower, driving backwards or changing direction, taking the speed and direction of the AGV into account. The interaction module is thus an extra steering device on top of the AGV.
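The decision described above can be illustrated with a minimal sketch. This is not the project's implementation: the names `Human`, `AgvState`, `Advice`, `in_path` and `advise`, as well as every threshold, are hypothetical and chosen only to show how distance, bearing and the AGV's signed speed could be combined into one of the reactions mentioned in the text.

```python
from dataclasses import dataclass
from enum import Enum


class Advice(Enum):
    """Hypothetical set of signals the module could send to the AGV."""
    CONTINUE = "continue"
    SLOW_DOWN = "slow_down"
    CHANGE_DIRECTION = "change_direction"
    BACK_OFF = "back_off"  # drive away, opposite to the current travel direction


@dataclass
class Human:
    distance_m: float   # distance from the AGV to the human
    bearing_deg: float  # angle from the AGV's forward axis, 0 = straight ahead


@dataclass
class AgvState:
    speed_mps: float    # signed speed: positive = forward, negative = backward


# Assumed thresholds for illustration only; not taken from the project.
PATH_HALF_ANGLE_DEG = 30.0  # half-width of the cone counted as "in the path"
SLOW_DOWN_TIME_S = 6.0      # slow down if the human is reached within this time
EVADE_TIME_S = 3.0          # change direction if reached within this time
BACK_OFF_DISTANCE_M = 1.0   # back off if the human is this close


def in_path(human: Human, agv: AgvState) -> bool:
    """Return True if the human lies in the cone along the travel direction."""
    # Normalise the bearing to the interval [-180, 180).
    bearing = (human.bearing_deg + 180.0) % 360.0 - 180.0
    if agv.speed_mps >= 0:
        return abs(bearing) <= PATH_HALF_ANGLE_DEG
    # Driving backwards: the path is the cone behind the AGV.
    return abs(bearing) >= 180.0 - PATH_HALF_ANGLE_DEG


def advise(human: Human, agv: AgvState) -> Advice:
    """Pick a reaction from the human's position and the AGV's speed and direction."""
    if agv.speed_mps == 0 or not in_path(human, agv):
        return Advice.CONTINUE
    if human.distance_m <= BACK_OFF_DISTANCE_M:
        return Advice.BACK_OFF
    time_to_reach_s = human.distance_m / abs(agv.speed_mps)
    if time_to_reach_s <= EVADE_TIME_S:
        return Advice.CHANGE_DIRECTION
    if time_to_reach_s <= SLOW_DOWN_TIME_S:
        return Advice.SLOW_DOWN
    return Advice.CONTINUE
```

In this sketch, a human 5 m straight ahead of an AGV driving forward at 1 m/s would lead to `Advice.SLOW_DOWN`, while the same human would be ignored if the AGV were driving backwards. Using time-to-reach rather than raw distance is one way to take the AGV's speed into account; the actual module may weigh these inputs differently.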

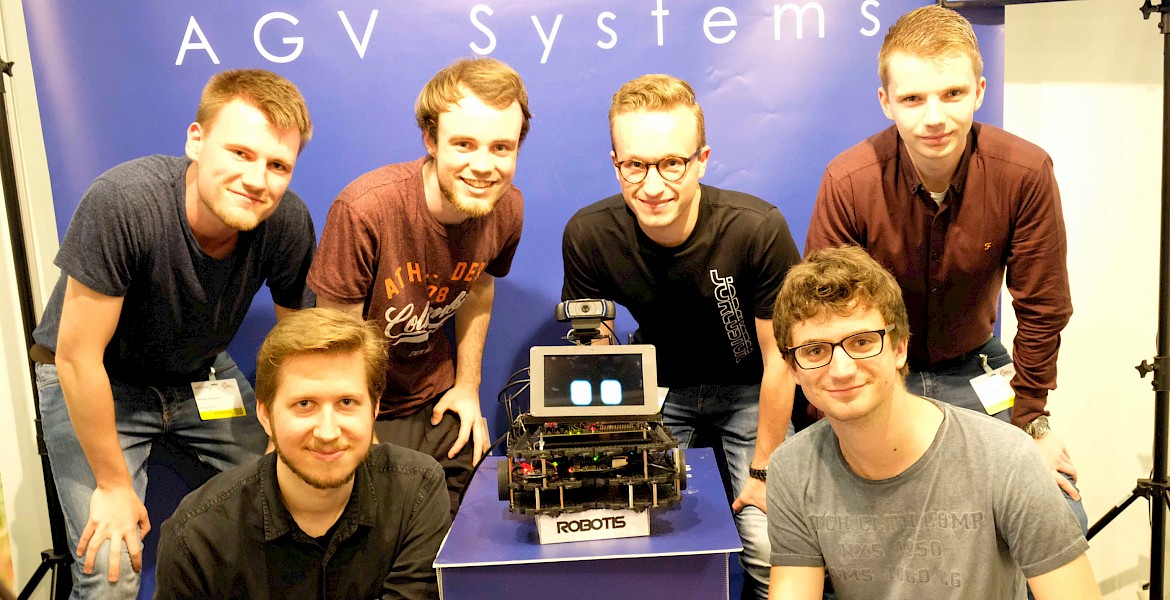

Student projects

- Spring 2019 - Semester 6 ICT & Technology - integration of vision and ML on a working prototype

- Spring 2020 - Semester 6 ICT & Technology - improving the gesture set for the module